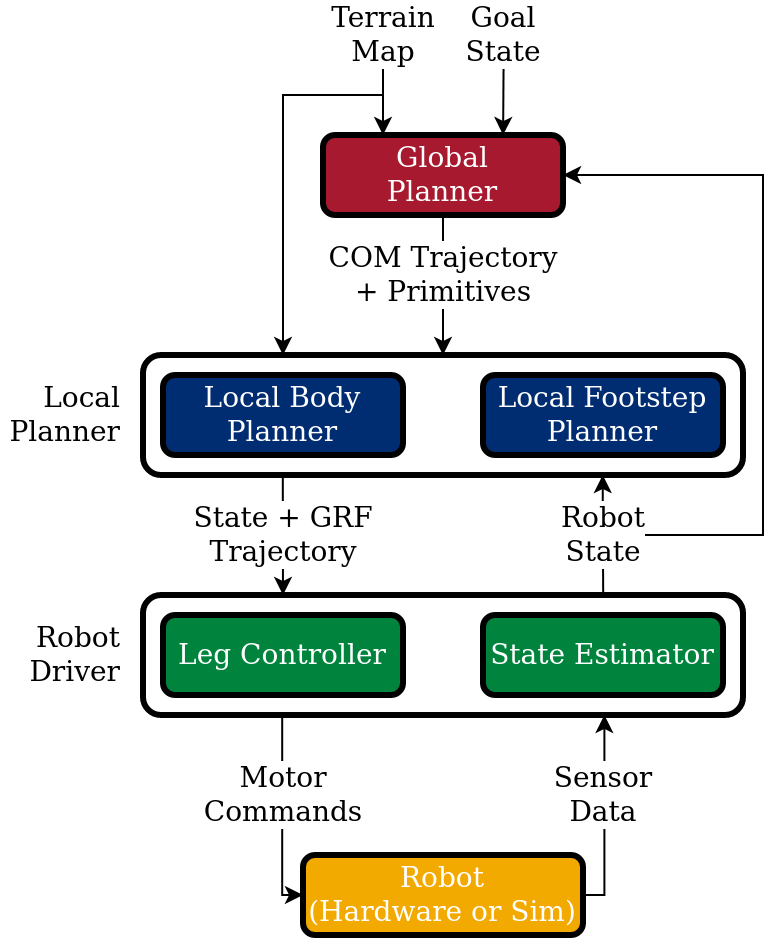

Architecture Overview

Quad-SDK is a layered, message-driven stack. Each layer is a ROS 2 package with clear input/output topics, so individual layers can be swapped or studied in isolation.

The hierarchy

flowchart TB

subgraph PERCEPTION[Perception]

TM[terrain_map]

end

subgraph PLAN[Planning]

GP[global_body_planner<br/>RRT-Connect + leaps]

LP[local_planner<br/>NMPC body + Raibert footstep]

NMPC[(nmpc_controller<br/>library)]

end

subgraph CONTROL[Control + estimation]

RD[robot_driver<br/>control loop]

EKF[state_estimator<br/>EKF]

BFE[body_force_estimator]

end

subgraph EXEC[Execution]

HW[hardware_interface]

SIM[Gazebo / MuJoCo / IsaacSim]

end

TM --> GP

TM --> LP

GP -- global_plan --> LP

LP -- local_plan --> RD

LP <-.uses.-> NMPC

RD -- joint_cmd --> HW

RD -- joint_cmd --> SIM

HW -- joint_state, imu --> EKF

SIM -- joint_state, imu --> EKF

EKF -- state/ground_truth --> LP

EKF -- state/ground_truth --> GP

EKF -- state/ground_truth --> RD

EKF -- state/ground_truth --> BFE

RD -- joint_cmd --> BFE

BFE -- external_wrench --> LP

Layer summary

| Layer | Frequency | What it produces | Where it lives |
|---|---|---|---|
| Multi-robot coordination (optional) | per goal change | Conflict-free per-robot global plans | conflict_based_search |
| Global plan | 5–20 Hz | Path of body states + GRFs to a goal | global_body_planner |
| Local plan | 333 Hz | 26-step NMPC body plan + footstep schedule | local_planner |
| NMPC | called per local-plan tick | OCP solution (states, GRFs) | nmpc_controller |
| Control | 500 Hz | Per-joint torque/PD commands | robot_driver |
| Estimation | 500 Hz | Body state + foot contacts | robot_driver |
| External wrench | 100 Hz | Disturbance estimate | body_force_estimator |
| Simulation | 1–2 kHz | Joint states, IMU, contacts | quad_simulator |

For multi-robot scenarios, the conflict_based_search node sits above the per-robot global planners and coordinates them via the plan_with_constraints service. See the Multi-Robot tutorial.

Topic conventions

All robot-scoped topics are namespaced as /robot_<id>/<topic>. Multi-robot configs (see CBS demos) replicate the full stack under each namespace.

| Topic | Type | Producer → Consumer |
|---|---|---|
| state/ground_truth | quad_msgs/RobotState | estimator → planners, driver |
| global_plan | quad_msgs/RobotPlan | global → local planner |
| local_plan | quad_msgs/LocalPlan | local planner → driver |
| foot_plan_continuous | quad_msgs/MultiFootPlanContinuous | local planner → driver |
| terrain_map | grid_map_msgs/GridMap | perception → planners |
| cmd_vel | geometry_msgs/Twist | user → local planner |
| control/mode | std_msgs/UInt8 | user → driver |

Full message definitions: quad_msgs.
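As a rough illustration of the /robot_<id>/<topic> convention, the sketch below builds fully qualified names for a few topics from the table. The helper name `robot_topic` and the use of integer ids are assumptions made for this example; they are not taken from Quad-SDK.

```python
# Illustrative helper only: `robot_topic` is a hypothetical name, and integer
# robot ids are an assumption about what <id> looks like in practice.

def robot_topic(robot_id: int, topic: str) -> str:
    """Return the namespaced form /robot_<id>/<topic> of a robot-scoped topic."""
    return f"/robot_{robot_id}/{topic.lstrip('/')}"


# Topic names taken from the table above.
TOPICS = ["state/ground_truth", "global_plan", "local_plan"]

# A two-robot configuration replicates every topic under each namespace.
namespaced = {rid: [robot_topic(rid, t) for t in TOPICS] for rid in (1, 2)}

for rid, names in namespaced.items():
    print(rid, names)
```

Keeping the prefix logic in one place means per-robot code can refer to the short topic names from the table and stay unaware of how many robots are running.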

Launch composition

The runtime is composed from launch files under quad_utils/launch/ rather than from a single monolithic config:

    # Single-robot
    quad_gazebo.py ─┐
                    ├── robot_bringup.py ── robot_driver.py
    quad_mujoco.py ─┘                       (per-robot)

    quad_plan.py ──── planning.py ──── global_body_planner_node
                                   ├── local_planner_node
                                   ├── body_force_estimator (optional)
                                   └── logging (optional)

    # Multi-robot
    quad_multi_gazebo.py ── robot_bringup.py × N (one per robot)

    multi_robot.py ──── planning.py × N (per-robot stacks)
                    ├── conflict_based_search_node (central coordinator)
                    └── logging_cbs.py × N (optional, --logging_cbs:=true)

Single-robot scenarios pass a robot_configs JSON list with one entry; multi-robot scenarios pass an N-robot list with each robot's goal_state field set. robot_bringup.py iterates and stamps namespaces. The CBS coordinator only runs in the multi-robot path. See:

- quad_utils/launch/quad_gazebo.py (single)
- quad_utils/launch/quad_multi_gazebo.py (multi)
- quad_utils/launch/multi_robot.py (per-robot planning + CBS)
- quad_utils/launch/logging_cbs.py (per-robot CBS-debug bagging)
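To make the robot_configs idea concrete, here is a minimal sketch of what a one-entry and an N-entry list might look like, and how a bringup step could iterate over them and stamp namespaces. Only the `goal_state` key comes from the text above. The `id` key, the [x, y] goal layout, and the `stamp_namespaces` function are assumptions for illustration and may not match the real launch files.

```python
import json

# Hypothetical payloads. Only `goal_state` is named in the documentation;
# the `id` key and the [x, y] layout of the goal are assumptions.
single = json.dumps([{"id": 1}])
multi = json.dumps([
    {"id": 1, "goal_state": [5.0, 0.0]},
    {"id": 2, "goal_state": [0.0, 5.0]},
])


def stamp_namespaces(robot_configs_json: str) -> list:
    """Parse the JSON list and attach a robot_<id> namespace to each entry."""
    configs = json.loads(robot_configs_json)
    return [{**cfg, "namespace": f"robot_{cfg['id']}"} for cfg in configs]


for cfg in stamp_namespaces(multi):
    print(cfg["namespace"], cfg["goal_state"])
```

The same function handles both cases, which mirrors the point above: the single-robot path is just the N = 1 instance of the list.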

Module deep-dives

- ROS 2 migration — what changed from ROS 1, what to update if you're porting code.

- Pinocchio integration — how the kinematics/dynamics layer was rewritten.

Design choices worth knowing

Why NMPC as a library?

nmpc_controller builds a CasADi/IPOPT solver once at startup and exposes a synchronous solve(). The local planner calls it directly so we avoid the latency and brittleness of a separate ROS node round-trip per control tick.
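The build-once, call-many pattern can be sketched in a few lines. `ToySolver` and `ToyLocalPlanner` are hypothetical stand-ins: they do not use CasADi or IPOPT and do not mirror the real nmpc_controller interface. The horizon of 26 is borrowed from the layer summary table.

```python
# Pattern sketch only. `ToySolver` is a hypothetical stand-in: it does not use
# CasADi or IPOPT and does not mirror the real nmpc_controller interface.

class ToySolver:
    builds = 0  # counts how many times the expensive setup has run

    def __init__(self, horizon: int):
        # Expensive one-time setup (in the real library: building the solver).
        ToySolver.builds += 1
        self.horizon = horizon

    def solve(self, current: float, target: float) -> list:
        """Synchronous call: return a straight-line plan from current to target."""
        step = (target - current) / self.horizon
        return [current + step * (k + 1) for k in range(self.horizon)]


class ToyLocalPlanner:
    def __init__(self):
        self.solver = ToySolver(horizon=26)  # built once at startup

    def tick(self, current: float, target: float) -> list:
        # Plain function call per tick; no message round-trip to another node.
        return self.solver.solve(current, target)


planner = ToyLocalPlanner()
plans = [planner.tick(0.0, 1.0) for _ in range(3)]
print(ToySolver.builds, len(plans[0]))
```

Three ticks reuse the same solver instance, so the setup cost is paid once while each tick only pays for the solve itself.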

Why split global vs. local planning?

Global search (~hundreds of milliseconds, RRT-Connect) and local refinement (~3 ms, NMPC) live on different timescales. Splitting them lets each be tuned and replaced independently.
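The timescale gap can be checked with simple arithmetic on the rates quoted in the layer summary table. The snippet below is only a back-of-the-envelope calculation, not a measurement.

```python
# Rates taken from the layer summary table; the arithmetic is illustrative.
LOCAL_PLAN_HZ = 333.0
GLOBAL_PLAN_HZ = (5.0, 20.0)  # slowest and fastest quoted global rates

# Period of one local-plan tick, in milliseconds.
local_period_ms = 1000.0 / LOCAL_PLAN_HZ

# Period of one global plan update at each end of the quoted range.
global_period_ms = tuple(1000.0 / hz for hz in GLOBAL_PLAN_HZ)

# Number of local-plan ticks that elapse per global plan update.
local_ticks_per_global = tuple(LOCAL_PLAN_HZ / hz for hz in GLOBAL_PLAN_HZ)

print(f"local period: {local_period_ms:.2f} ms")
print(f"global period: {global_period_ms[1]:.0f} to {global_period_ms[0]:.0f} ms")
print(f"local ticks per global update: "
      f"{local_ticks_per_global[1]:.1f} to {local_ticks_per_global[0]:.1f}")
```

At these rates the local planner runs somewhere between roughly 17 and 67 ticks for every global plan update, which is why the two layers are tuned separately.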

Why three simulators?

Each backend earns its place. Gazebo Harmonic is feature-rich (sensors, physics plugins, fluid contact) and covers every platform. MuJoCo is fast, deterministic, and renders without a GPU — ideal for headless training rigs and perf tests. NVIDIA IsaacSim 5.1 (beta) brings high-fidelity rendering and GPU-accelerated scenes for the Spirit 40 and Go2 — it needs a separate IsaacLab conda install; see Running in IsaacSim. The controller and planning stacks run unmodified across all three. See the simulator support matrix.